Understanding Reinforcement Learning

Introduction to Reinforcement Learning

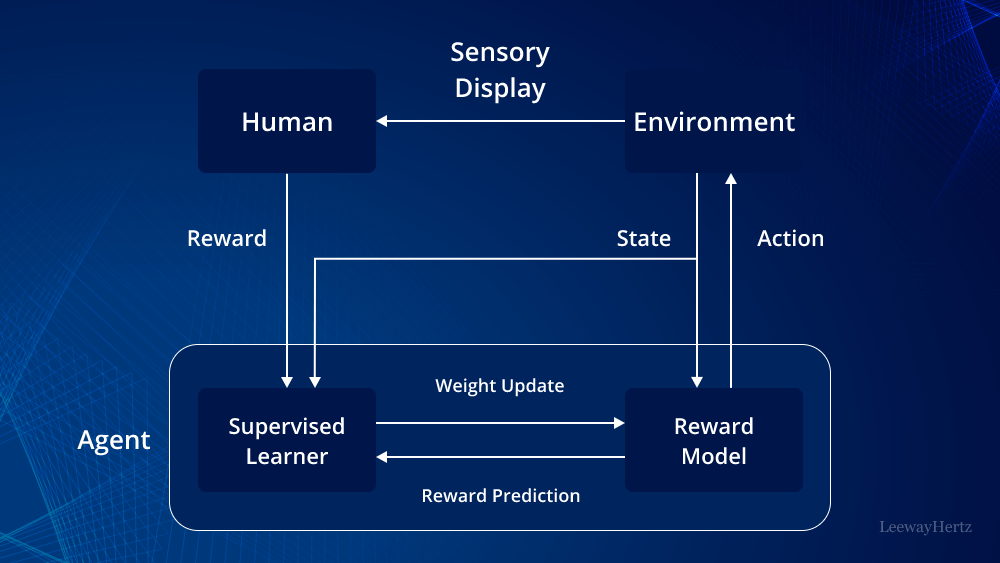

Reinforcement learning (RL) is a branch of machine learning where an agent learns to make decisions by interacting with its environment. The agent receives feedback in the form of rewards or penalties based on its actions, and it aims to maximise the cumulative reward over time.
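The paragraph above describes "cumulative reward" informally. One common way to make it precise, assumed here, is the discounted return r_0 + γ·r_1 + γ²·r_2 + …, where a discount factor γ between 0 and 1 makes rewards further in the future count for less. The helper name `discounted_return` is hypothetical and used only for illustration.

```python
def discounted_return(rewards, gamma=0.99):
    """Sum of rewards, each weighted by gamma**t for its time step t."""
    total = 0.0
    # Work backwards so each step applies G_t = r_t + gamma * G_{t+1}.
    for r in reversed(rewards):
        total = r + gamma * total
    return total

# A reward of 1 at each of three steps, discounted at 0.5: 1 + 0.5 + 0.25
print(discounted_return([1.0, 1.0, 1.0], gamma=0.5))  # 1.75
```

With γ close to 1 the agent weighs distant rewards almost as heavily as immediate ones; with smaller γ it becomes more short-sighted.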

The Basics of Reinforcement Learning

At the core of reinforcement learning is the concept of trial and error. An RL agent explores its environment, trying different actions and observing the outcomes. Over time, it learns which actions yield the most favourable results.

- Agent: The learner or decision-maker.

- Environment: Everything that the agent interacts with.

- Actions: The set of all possible moves an agent can make.

- Rewards: Feedback from the environment used to evaluate actions.

- State: The agent's current situation, as observed from the environment.

- Policy: A strategy that defines the action an agent will take in a given state.
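To make these terms concrete, here is a minimal sketch of the agent-environment loop, assuming a hypothetical `CorridorEnv` toy environment (it is not from any library; its `reset`/`step` method names only echo a common convention). The environment holds the state and hands out rewards, the action set is `left`/`right`, and a policy is any function from state to action.

```python
import random

class CorridorEnv:
    """Hypothetical environment: cells 0..length-1, start at 0, goal at the last cell."""

    ACTIONS = ("left", "right")  # the set of possible moves

    def __init__(self, length=5):
        self.length = length
        self.state = 0

    def reset(self):
        self.state = 0
        return self.state

    def step(self, action):
        # Move one cell, clamped to the corridor bounds.
        if action == "right":
            self.state = min(self.state + 1, self.length - 1)
        else:
            self.state = max(self.state - 1, 0)
        done = self.state == self.length - 1
        # +1 for reaching the goal, a small penalty for every other step.
        reward = 1.0 if done else -0.1
        return self.state, reward, done


def random_policy(state):
    """A policy maps a state to an action; this one ignores the state entirely."""
    return random.choice(CorridorEnv.ACTIONS)


def run_episode(env, policy, max_steps=100):
    state = env.reset()
    total_reward = 0.0
    for _ in range(max_steps):
        action = policy(state)                  # the agent decides
        state, reward, done = env.step(action)  # the environment responds
        total_reward += reward                  # feedback accumulates
        if done:
            break
    return total_reward


print(run_episode(CorridorEnv(), random_policy))
print(run_episode(CorridorEnv(), lambda s: "right"))
```

The random policy usually wanders and collects penalties before reaching the goal, while the always-right policy reaches it in the fewest steps; learning a good policy from such feedback is the job of the algorithms discussed below.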

The Role of Exploration and Exploitation

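A key challenge in reinforcement learning is balancing exploration (trying new actions) and exploitation (choosing actions already known to be rewarding). An agent that only exploits can get stuck repeating the first reasonably good action it finds, while one that only explores never makes use of what it has learned. Effective RL strategies balance the two to maximise long-term reward.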

Mastering Reinforcement Learning: 8 Essential Tips for Success

- Understand the basics of reinforcement learning algorithms such as Q-learning and policy gradients.

- Choose appropriate reward functions to guide the learning process effectively.

- Explore different exploration-exploitation strategies like epsilon-greedy or Thompson sampling.

- Consider using deep reinforcement learning for complex problems with large state spaces.

- Tune hyperparameters carefully to ensure optimal performance of the RL agent.

- Monitor and analyse the learning process to identify areas for improvement or fine-tuning.

- Be patient, as training RL models can take time, especially for more challenging tasks.

- Stay updated with the latest research and advancements in reinforcement learning techniques.

Understand the basics of reinforcement learning algorithms such as Q-learning and policy gradients.

To excel in reinforcement learning, it is crucial to grasp the fundamentals of key algorithms such as Q-learning and policy gradients. Q-learning is a model-free algorithm: instead of learning how the environment works, the agent estimates the value of taking a particular action in a given state (its Q-value) and chooses actions with high estimated value. Policy gradient methods, by contrast, parameterise the policy directly and adjust its parameters in the direction that increases expected reward. Understanding these two families, value-based and policy-based, provides a solid foundation for building effective reinforcement learning agents.
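A minimal sketch of both ideas follows, assuming a hypothetical five-state corridor for tabular Q-learning and a hypothetical two-armed bandit for the policy-gradient part. The policy-gradient method shown is REINFORCE, one specific member of that family; all function names and constants are illustrative, not from any library.

```python
import math
import random

# ---- Tabular Q-learning (value-based) ----
# Hypothetical task: states 0..4 on a line; action 0 moves left, 1 moves right.
# Reaching state 4 ends the episode with reward 1.0; every other step gives 0.
N_STATES, GOAL = 5, 4

def step(state, action):
    nxt = min(state + 1, GOAL) if action == 1 else max(state - 1, 0)
    return nxt, (1.0 if nxt == GOAL else 0.0), nxt == GOAL

def q_learning(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # one estimate per (state, action)
    for _ in range(episodes):
        s, done = 0, False
        while not done:
            if rng.random() < epsilon:  # explore
                a = rng.randrange(2)
            else:  # exploit, breaking ties at random
                best = max(q[s])
                a = rng.choice([x for x in (0, 1) if q[s][x] == best])
            s_next, r, done = step(s, a)
            # Target bootstraps from the best next action; no future value after the goal.
            target = r + (0.0 if done else gamma * max(q[s_next]))
            q[s][a] += alpha * (target - q[s][a])
            s = s_next
    return q

# ---- REINFORCE policy gradient (policy-based) ----
# Hypothetical 2-armed bandit: arm 0 always pays 0.2, arm 1 always pays 1.0.
PAYOUTS = (0.2, 1.0)

def softmax(prefs):
    m = max(prefs)  # subtract the max for numerical stability
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]

def reinforce_bandit(steps=500, lr=0.1, seed=0):
    rng = random.Random(seed)
    theta = [0.0, 0.0]  # policy parameters: one preference per arm
    for _ in range(steps):
        probs = softmax(theta)
        a = 0 if rng.random() < probs[0] else 1  # sample an action from the policy
        r = PAYOUTS[a]
        # For a softmax policy, d log pi(a) / d theta_k = 1[k == a] - pi(k).
        for k in range(2):
            theta[k] += lr * r * ((1.0 if k == a else 0.0) - probs[k])
    return softmax(theta)  # final action probabilities

q = q_learning()
print("greedy actions:", ["right" if q[s][1] > q[s][0] else "left" for s in range(GOAL)])
print("P(arm 1):", round(reinforce_bandit()[1], 3))
```

The contrast is the point: Q-learning learns numbers attached to state-action pairs and derives its behaviour from them, whereas REINFORCE never estimates values at all and instead nudges the action probabilities themselves towards whatever earned more reward.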

Choose appropriate reward functions to guide the learning process effectively.

In reinforcement learning, the reward function is how we tell the agent what we want, so choosing it well is crucial. It acts as the agent's compass, guiding it towards actions that lead to positive outcomes and away from those with negative consequences. Because the agent optimises exactly the reward it is given, a poorly specified reward can produce behaviour that scores highly while missing the intended goal, and a reward that is too sparse (for example, given only on final success) can leave the agent with little signal to learn from. Careful reward design is therefore essential for aligning the learning process with our objectives.
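One well-known way to add guidance to a sparse reward without changing what the agent should ultimately do is potential-based reward shaping: add γ·Φ(s') − Φ(s) for some potential function Φ over states. Shaping of this form is known to leave the optimal policy unchanged, provided the potential is zero at terminal states (as it is at the goal here). The sketch below assumes a hypothetical goal-reaching task on integer cells and a distance-based potential; the names and constants are illustrative.

```python
# Hypothetical goal-reaching task: states are integer cells, the goal is cell GOAL.
GOAL = 10
GAMMA = 0.99  # assumed discount factor

def sparse_reward(next_state):
    """Reward only on reaching the goal: easy to specify, but rarely informative."""
    return 1.0 if next_state == GOAL else 0.0

def potential(state):
    """Assumed heuristic: the closer to the goal, the higher the potential (0 at the goal)."""
    return -abs(GOAL - state)

def shaped_reward(state, next_state):
    """Sparse reward plus a potential-based shaping term gamma * phi(s') - phi(s)."""
    return sparse_reward(next_state) + GAMMA * potential(next_state) - potential(state)

print(shaped_reward(4, 5))  # step towards the goal: positive
print(shaped_reward(5, 4))  # step away from the goal: negative
```

The shaped signal tells the agent on every step whether it moved in a promising direction, whereas the sparse version is silent until the goal is actually reached.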

Explore different exploration-exploitation strategies like epsilon-greedy or Thompson sampling.

To deepen your understanding of reinforcement learning, it is worth experimenting with different exploration-exploitation strategies such as epsilon-greedy and Thompson sampling. These strategies decide, at each step, whether the agent tries a new action or exploits one it already knows to be rewarding. Epsilon-greedy is the simpler of the two: with a small, predefined probability ε the agent picks an action at random, and otherwise it picks the action with the highest estimated value. Thompson sampling is a probabilistic method that accounts for uncertainty: the agent keeps a probability distribution over each action's value, draws one sample from each, and picks the action whose sample is highest, so actions it is still unsure about continue to be tried. Comparing such approaches helps you choose one that lets your agents learn efficiently.
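The two strategies can be compared on a multi-armed bandit, the simplest setting where exploration matters. This sketch assumes a hypothetical Bernoulli bandit (each arm pays 1 with a fixed, unknown probability) and, for Thompson sampling, a Beta(1, 1) prior on each arm's success rate; `run_bandit` and its parameters are illustrative names, and `random.Random.betavariate` is from Python's standard library.

```python
import random

def epsilon_greedy(values, epsilon, rng):
    """With probability epsilon pick a random arm (explore), else the best estimate (exploit)."""
    if rng.random() < epsilon:
        return rng.randrange(len(values))
    return max(range(len(values)), key=lambda i: values[i])

def thompson_sample(successes, failures, rng):
    """Draw each arm's success rate from its Beta(1 + s, 1 + f) posterior; pick the best draw."""
    draws = [rng.betavariate(1 + s, 1 + f) for s, f in zip(successes, failures)]
    return max(range(len(draws)), key=lambda i: draws[i])

def run_bandit(strategy, true_probs, steps=2000, epsilon=0.1, seed=0):
    """Hypothetical Bernoulli bandit; returns how often each arm was pulled."""
    rng = random.Random(seed)
    k = len(true_probs)
    pulls, successes, failures = [0] * k, [0] * k, [0] * k
    for _ in range(steps):
        if strategy == "epsilon_greedy":
            # Empirical mean reward per arm (0 for arms never pulled).
            means = [successes[i] / pulls[i] if pulls[i] else 0.0 for i in range(k)]
            arm = epsilon_greedy(means, epsilon, rng)
        elif strategy == "thompson":
            arm = thompson_sample(successes, failures, rng)
        else:
            raise ValueError(f"unknown strategy: {strategy}")
        reward = 1 if rng.random() < true_probs[arm] else 0
        pulls[arm] += 1
        successes[arm] += reward
        failures[arm] += 1 - reward
    return pulls

for strategy in ("epsilon_greedy", "thompson"):
    print(strategy, run_bandit(strategy, (0.2, 0.5, 0.8)))
```

A design difference worth noticing: epsilon-greedy keeps exploring at the fixed rate ε forever, while Thompson sampling explores less as its posteriors narrow, because draws for clearly worse arms rarely come out on top.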

Consider using deep reinforcement learning for complex problems with large state spaces.

When tackling complex problems with vast state spaces, it is worth considering deep reinforcement learning. Classical tabular methods store a separate value for every state (or state-action pair), which becomes infeasible when states are, for example, raw images. Deep reinforcement learning instead uses neural networks as function approximators for value functions or policies, so the agent can generalise from states it has seen to similar states it has not. This makes it particularly effective in scenarios where traditional reinforcement learning methods struggle to scale, helping practitioners find good solutions in challenging environments.
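The core idea that deep RL builds on, replacing a lookup table with a parameterised function, can be shown with the simplest approximator: a linear one. The sketch below is semi-gradient Q-learning with linear features, not a deep RL method itself; a deep method would swap the dot product for a neural network, but the update has the same shape (move the prediction towards a temporal-difference target). The feature function, state range and all names are hypothetical.

```python
# Linear function approximation: Q(s, a) = weights[a] . features(s).

def features(state):
    """Hypothetical features for a continuous state in [0, 1]: [bias, x, x^2]."""
    x = float(state)
    return [1.0, x, x * x]

def q_value(weights, state, action):
    return sum(w * f for w, f in zip(weights[action], features(state)))

def semi_gradient_q_update(weights, s, a, r, s_next, done, alpha=0.1, gamma=0.99):
    """One semi-gradient Q-learning step; for a linear Q, the gradient w.r.t. weights[a] is features(s)."""
    n_actions = len(weights)
    best_next = 0.0 if done else max(q_value(weights, s_next, b) for b in range(n_actions))
    td_error = r + gamma * best_next - q_value(weights, s, a)
    for i, f in enumerate(features(s)):
        weights[a][i] += alpha * td_error * f
    return td_error

weights = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]  # 2 actions x 3 features
semi_gradient_q_update(weights, s=0.5, a=1, r=1.0, s_next=0.6, done=True)
# The update also raises the estimate for a nearby state that was never visited.
print(q_value(weights, 0.5, 1), q_value(weights, 0.55, 1))
```

That last line is the property a table cannot offer: one experience at state 0.5 changes the estimate at 0.55 too, which is what lets function approximators cope with state spaces far too large to enumerate.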

Tune hyperparameters carefully to ensure optimal performance of the RL agent.

When working with reinforcement learning, it is crucial to tune hyperparameters carefully, because they strongly influence both the agent's behaviour and how well it learns. Typical examples are the learning rate, the discount factor, and exploration parameters such as ε in epsilon-greedy. Adjusting them changes, for instance, how quickly value estimates respond to new experience and how the agent balances exploration against exploitation to maximise long-term reward. Because RL results can vary considerably between random seeds, it is good practice to compare settings averaged over several runs rather than a single one. Thoughtful hyperparameter tuning is a fundamental part of making reinforcement learning algorithms efficient and effective.
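A minimal sketch of systematic tuning: a grid search over a learning rate α and exploration rate ε, scoring each pair by its mean reward across several random seeds. It assumes a hypothetical three-armed bandit with Gaussian rewards as the task; `run_agent` and `grid_search` are illustrative names, and real projects often use random search or dedicated tuning libraries instead of a full grid.

```python
import itertools
import random
import statistics

def run_agent(alpha, epsilon, seed, true_means=(0.1, 0.5, 0.9), steps=500):
    """Hypothetical bandit agent with constant step size alpha and epsilon-greedy
    exploration; returns the average reward it obtained."""
    rng = random.Random(seed)
    q = [0.0] * len(true_means)
    total = 0.0
    for _ in range(steps):
        if rng.random() < epsilon:
            a = rng.randrange(len(q))
        else:
            a = max(range(len(q)), key=lambda i: q[i])
        r = rng.gauss(true_means[a], 1.0)  # noisy reward
        q[a] += alpha * (r - q[a])         # constant-step-size value update
        total += r
    return total / steps

def grid_search(alphas, epsilons, seeds=range(10)):
    """Score every (alpha, epsilon) pair by its mean reward over several seeds."""
    results = {}
    for alpha, epsilon in itertools.product(alphas, epsilons):
        results[(alpha, epsilon)] = statistics.mean(
            run_agent(alpha, epsilon, seed) for seed in seeds
        )
    best = max(results, key=results.get)
    return best, results

best, results = grid_search([0.05, 0.1, 0.5], [0.0, 0.1, 0.3])
for params, score in sorted(results.items()):
    print(params, round(score, 3))
print("best (alpha, epsilon):", best)
```

Averaging over seeds matters here: with a single seed, a lucky early reward can make a poor setting look best.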

Monitor and analyse the learning process to identify areas for improvement or fine-tuning.

Monitoring and analysing the learning process in reinforcement learning is crucial for identifying areas that need improvement or fine-tuning. By tracking how the agent interacts with its environment, for example its return per episode and how long episodes last, we can gain valuable insight into its performance. Because individual episode returns are noisy, a moving average over recent episodes usually gives a clearer picture of whether learning is progressing, stalling, or becoming unstable. This data-driven approach lets us pinpoint weaknesses, adjust strategies, and improve the agent's decision-making in complex environments.
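A small helper for the moving-average monitoring described above, sketched under the assumption that the training loop reports one return per episode. `ReturnMonitor` and its methods are hypothetical names; in practice these values are often sent to a plotting or experiment-tracking tool.

```python
from collections import deque

class ReturnMonitor:
    """Hypothetical helper that tracks episode returns during training."""

    def __init__(self, window=100):
        self.recent = deque(maxlen=window)  # keeps only the last `window` returns
        self.averages = []                  # moving average after each episode

    def record(self, episode_return):
        self.recent.append(episode_return)
        avg = sum(self.recent) / len(self.recent)
        self.averages.append(avg)
        return avg

    def improved_since(self, episodes_ago):
        """True if the moving average is higher now than `episodes_ago` episodes back."""
        if len(self.averages) <= episodes_ago:
            return False
        return self.averages[-1] > self.averages[-1 - episodes_ago]

monitor = ReturnMonitor(window=3)
for ret in [0.0, 1.0, 2.0, 3.0, 4.0]:
    avg = monitor.record(ret)
    print(f"return={ret:.1f}  moving_avg={avg:.2f}")
print("improving:", monitor.improved_since(2))
```

A check like `improved_since` can flag a plateau early, which is often the cue to revisit the reward function or hyperparameters.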

Be patient, as training RL models can take time, especially for more challenging tasks.

Patience is essential when training reinforcement learning agents. Training can be time-consuming, particularly for complex tasks that require many interactions with the environment and repeated rounds of iteration. Allowing models sufficient time to learn and adapt leads to more effective and robust agents, and ultimately to better outcomes in challenging scenarios.

Stay updated with the latest research and advancements in reinforcement learning techniques.

To excel in reinforcement learning, it is important to stay informed about the latest research and techniques in the field. Keeping abreast of new methods and best practices helps practitioners deepen their understanding and apply reinforcement learning more effectively, and continuous learning keeps them current in this rapidly evolving discipline.